Autonomous Collaborative 3D Multi-Modal Mapping System

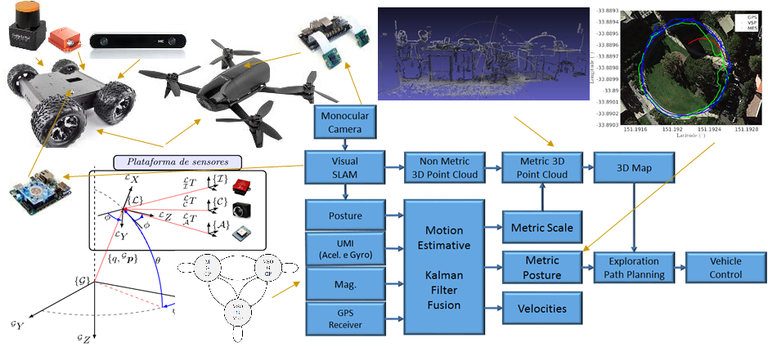

This research project proposes the study and design of an autonomous 3D mapping system based on Visual SLAM that uses collaborative aerial and ground robots, as illustrated in the figure above.

Achieving this goal requires advancing the state of the art in several areas of robotics, including control, localization, mapping, sensor modeling and calibration, and path planning. These components will be combined into a fully functional framework for end-to-end environment exploration and information retrieval.

The robot control system will be based on dynamic models written in state-space form, following recent advances by researchers involved in this project. To attenuate external disturbances and parametric uncertainties, adaptive nonlinear H∞ control techniques will be developed together with Deep Learning and Gaussian Process models, producing a hybrid semi-parametric approach to state estimation and drift management. Recursive robust control approaches for systems subject to parametric uncertainties will also be evaluated.
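The proposal does not fix how the learned models enter the controller. To illustrate only the "hybrid semi-parametric" idea, the sketch below assumes a scalar nominal state-space model x_{k+1} = a x_k + b u_k and learns the residual it fails to explain with Gaussian Process regression (squared-exponential kernel). The class `SemiParametricModel`, the kernel hyperparameters and the toy `true_step` dynamics are hypothetical, and the H∞ control law itself is not shown.

```python
import math


def rbf(x1, x2, lengthscale=0.5, variance=0.01):
    """Squared-exponential kernel (hyperparameters are illustrative assumptions)."""
    return variance * math.exp(-0.5 * ((x1 - x2) / lengthscale) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][k] * x[k] for k in range(r + 1, n))) / M[r][r]
    return x


class SemiParametricModel:
    """Hypothetical scalar model: x_next = a*x + b*u + GP residual g(x)."""

    def __init__(self, a, b, noise=1e-6):
        self.a, self.b, self.noise = a, b, noise
        self.train_x, self.alpha = [], []

    def nominal(self, x, u):
        # Parametric part: the known state-space model.
        return self.a * x + self.b * u

    def fit(self, states, inputs, next_states):
        # Non-parametric part: GP regression on what the nominal model misses.
        self.train_x = list(states)
        residuals = [xn - self.nominal(x, u) for x, u, xn in zip(states, inputs, next_states)]
        K = [[rbf(xi, xj) + (self.noise if i == j else 0.0)
              for j, xj in enumerate(self.train_x)]
             for i, xi in enumerate(self.train_x)]
        self.alpha = solve(K, residuals)  # alpha = (K + noise*I)^-1 r

    def residual_mean(self, x):
        # GP posterior mean of the residual: k(x, X) . alpha
        return sum(a * rbf(x, xi) for a, xi in zip(self.alpha, self.train_x))

    def predict(self, x, u):
        return self.nominal(x, u) + self.residual_mean(x)


# Usage with toy "true" dynamics containing an unmodeled 0.1*sin(x) term.
def true_step(x, u):
    return 0.9 * x + 0.5 * u + 0.1 * math.sin(x)


states = [-2.0 + 0.25 * i for i in range(17)]
inputs = [0.0] * len(states)
model = SemiParametricModel(a=0.9, b=0.5)
model.fit(states, inputs, [true_step(x, u) for x, u in zip(states, inputs)])
```

Because the GP only models the residual, predictions fall back to the nominal model far from the training data, where the GP mean decays to zero.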

Visual sensors will be the primary source of information for mapping and localization, since they produce rich, dense representations of the environment, including color and texture. This information will be processed using Visual SLAM techniques, building on recent advances by researchers involved in this project, with a focus on real-time feature extraction, matching, and reconstruction that can cope with data gaps and partially observed structures.

To ensure proper metric scale estimation, SLAM estimates will be fused with the vehicle's odometry data through robust estimators based on Markovian Jump Linear Systems (MJLS). These estimators will model the operating modes of the navigation system according to the availability and reliability of each sensor, yielding a more robust model that can adapt to sensor failures and recover from otherwise catastrophic circumstances.

Mapping will be based on the point cloud produced by the SLAM algorithm, which will be used to build an occupancy model of the environment within the Hilbert Maps framework, a scene reconstruction technique proposed by researchers involved in this project that has shown strong results in efficient 3D occupancy modeling.
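A minimal sketch of mode-dependent fusion, assuming the operating mode (which sensors are currently healthy) is observed, for instance from sensor health flags. Under that assumption an MJLS estimator reduces to a Kalman filter whose measurement model switches with the mode; the Markov chain `TRANSITION` is used here only to simulate mode switching. The 1-D constant-velocity state, the noise values and the three modes are hypothetical, and the SLAM position is treated as already metric (a scale factor could be appended to the state to estimate it). A robust MJLS estimator, as proposed in the text, would additionally handle model uncertainty and unobserved modes.

```python
import random

DT = 0.1                                    # time step [s] (assumed)
Q = [[1e-4, 0.0], [0.0, 1e-3]]              # process noise on [position, velocity] (assumed)
# Each sensor: (measurement row h, noise variance r). SLAM measures position,
# odometry measures velocity. Values are illustrative.
SENSORS = {"slam": ([1.0, 0.0], 0.05 ** 2),
           "odom": ([0.0, 1.0], 0.02 ** 2)}
# Operating modes = which sensors are currently healthy.
MODES = [("slam", "odom"), ("slam",), ("odom",)]
# Markov chain over modes (row: current mode, column: next mode).
TRANSITION = [[0.90, 0.05, 0.05],
              [0.20, 0.80, 0.00],
              [0.20, 0.00, 0.80]]


def predict(x, P):
    """Constant-velocity prediction: x <- F x, P <- F P F^T + Q."""
    F = [[1.0, DT], [0.0, 1.0]]
    x = [x[0] + DT * x[1], x[1]]
    FP = [[sum(F[i][k] * P[k][j] for k in range(2)) for j in range(2)] for i in range(2)]
    P = [[sum(FP[i][k] * F[j][k] for k in range(2)) + Q[i][j] for j in range(2)] for i in range(2)]
    return x, P


def update(x, P, h, r, z):
    """Scalar Kalman update for one sensor with measurement row h."""
    Ph = [sum(P[i][k] * h[k] for k in range(2)) for i in range(2)]   # P h^T
    s = sum(h[i] * Ph[i] for i in range(2)) + r                       # innovation variance
    K = [Ph[i] / s for i in range(2)]                                 # Kalman gain
    innovation = z - sum(h[i] * x[i] for i in range(2))
    x = [x[i] + K[i] * innovation for i in range(2)]
    P = [[P[i][j] - K[i] * Ph[j] for j in range(2)] for i in range(2)]
    return x, P


def run(steps=300, seed=0):
    """Simulate a robot moving at 1 m/s while the sensor mode jumps."""
    rng = random.Random(seed)
    true_p, true_v = 0.0, 1.0
    x, P = [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]]
    mode = 0
    for _ in range(steps):
        true_p += DT * true_v
        x, P = predict(x, P)
        mode = rng.choices(range(3), weights=TRANSITION[mode])[0]
        # Mode-dependent measurement model: only healthy sensors are fused.
        for name in MODES[mode]:
            h, r = SENSORS[name]
            truth = true_p if name == "slam" else true_v
            x, P = update(x, P, h, r, truth + rng.gauss(0.0, r ** 0.5))
    return x, P, true_p, true_v


x_hat, P_hat, p_true, v_true = run()
```

When SLAM drops out (mode 2), the position variance grows until SLAM returns; when odometry drops out (mode 1), velocity is inferred from successive SLAM positions.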
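The core idea of a Hilbert map can be sketched as logistic regression on a feature map that approximates a kernel, trained with stochastic gradient descent on points labeled occupied (beam endpoints) or free (samples along each beam). This version assumes random Fourier features for an RBF kernel, works in 2-D rather than 3-D for brevity, and uses a hypothetical simulated scan of a wall; the class name, scan generator and hyperparameters are illustrative, not the framework's actual implementation.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


class HilbertMap2D:
    """Logistic regression on random Fourier features approximating an RBF kernel."""

    def __init__(self, n_features=200, lengthscale=0.2, seed=0):
        self.rng = random.Random(seed)
        # Frequencies ~ N(0, 1/lengthscale^2) and phases ~ U(0, 2*pi) give
        # phi(a) . phi(b) ~= exp(-|a - b|^2 / (2 * lengthscale^2)).
        self.freqs = [(self.rng.gauss(0.0, 1.0 / lengthscale),
                       self.rng.gauss(0.0, 1.0 / lengthscale)) for _ in range(n_features)]
        self.phases = [self.rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
        self.scale = math.sqrt(2.0 / n_features)
        self.weights = [0.0] * n_features
        self.bias = 0.0

    def features(self, p):
        x, y = p
        return [self.scale * math.cos(fx * x + fy * y + ph)
                for (fx, fy), ph in zip(self.freqs, self.phases)]

    def _logit(self, phi):
        return sum(w * f for w, f in zip(self.weights, phi)) + self.bias

    def fit(self, points, labels, epochs=50, lr=0.5):
        data = [(self.features(p), y) for p, y in zip(points, labels)]
        for _ in range(epochs):
            self.rng.shuffle(data)
            for phi, y in data:
                g = sigmoid(self._logit(phi)) - y  # gradient of log-loss w.r.t. the logit
                self.weights = [w - lr * g * f for w, f in zip(self.weights, phi)]
                self.bias -= lr * g

    def occupancy(self, p):
        """Probability that point p is occupied."""
        return sigmoid(self._logit(self.features(p)))


def simulate_scan(wall_x=2.0, n_beams=21, samples_per_beam=8):
    """Hypothetical 2-D scan from the origin onto a wall at x = wall_x."""
    points, labels = [], []
    for i in range(n_beams):
        y_hit = -1.0 + 2.0 * i / (n_beams - 1)
        points.append((wall_x, y_hit))
        labels.append(1)                                 # beam endpoint: occupied
        for k in range(1, samples_per_beam + 1):
            f = 0.8 * k / samples_per_beam               # free samples up to 80% of the beam
            points.append((f * wall_x, f * y_hit))
            labels.append(0)
    return points, labels


points, labels = simulate_scan()
hmap = HilbertMap2D()
hmap.fit(points, labels)
```

The map is a continuous function: `hmap.occupancy` can be queried at any point, not just at grid cells, which is what makes it convenient for the planners described next.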

Once an occupancy model is available, it can be used to generate obstacle-free trajectories for autonomous exploration that continually adapt as new information arrives. Algorithms such as Rapidly-exploring Random Trees (RRT) and path planning methods based on constrained Bayesian optimization will be used to produce optimal trajectories with respect to arbitrarily defined behavioral functions, which guide each vehicle toward its mission goal. Furthermore, this will be done in a multi-vehicle regime, so each robot must be aware of its own role and those of its companions in order to avoid wasteful motion and harmful decisions. The use of multiple robots is justified by the possibility of completing exploration tasks in less time and with higher reliability, and by the use of vehicles with different perception and locomotion capabilities, which can reach areas that others cannot due to physical limitations.
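A basic RRT, sketched under the assumption that the occupancy model is exposed as a hypothetical predicate `is_free(point)` (for example, a threshold on the occupancy probability of a Hilbert map); here a single circular obstacle stands in for that model. Plain RRT returns a feasible path, not an optimal one; asymptotically optimal variants such as RRT*, or the Bayesian optimization approach mentioned above, are needed for optimality.

```python
import math
import random


def segment_free(a, b, is_free, resolution=0.05):
    """Check a straight segment by sampling it every `resolution` units."""
    n = max(1, int(math.dist(a, b) / resolution))
    return all(is_free((a[0] + (b[0] - a[0]) * k / n, a[1] + (b[1] - a[1]) * k / n))
               for k in range(n + 1))


def rrt(start, goal, is_free, bounds, step=0.5, goal_tol=0.5,
        goal_bias=0.05, max_iters=3000, seed=0):
    """Grow a tree from start; return a list of waypoints to goal, or None."""
    rng = random.Random(seed)
    nodes, parent = [start], [None]
    for _ in range(max_iters):
        # Sample a random point, occasionally the goal itself to speed up convergence.
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = (rng.uniform(*bounds[0]), rng.uniform(*bounds[1]))
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[i_near]
        d = math.dist(near, sample)
        if d == 0.0:
            continue
        # Steer at most `step` units from the nearest node toward the sample.
        t = min(step, d) / d
        new = (near[0] + t * (sample[0] - near[0]), near[1] + t * (sample[1] - near[1]))
        if not segment_free(near, new, is_free):
            continue
        nodes.append(new)
        parent.append(i_near)
        if math.dist(new, goal) <= goal_tol and segment_free(new, goal, is_free):
            if new != goal:
                nodes.append(goal)
                parent.append(len(nodes) - 2)
            # Walk back through parents to recover the path.
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None


# Usage: one circular obstacle stands in for the occupancy model.
def is_free(p):
    return math.dist(p, (5.0, 5.0)) > 2.0


path = rrt((1.0, 1.0), (9.0, 9.0), is_free, bounds=((0.0, 10.0), (0.0, 10.0)))
```

Because the map is queried only through `is_free`, the same planner could be rerun as the occupancy model is updated with new observations.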
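One simple way to give each vehicle a role is greedy task allocation: candidate exploration targets (e.g. frontiers of the occupancy map) are assigned one per robot in order of increasing travel cost, skipping targets a platform cannot physically reach. The sketch assumes straight-line distance as the cost and a boolean `needs_flight` flag per target; both, and all names, are hypothetical. Greedy assignment is not guaranteed to be optimal; the Hungarian algorithm solves the one-to-one case exactly.

```python
import math
from dataclasses import dataclass


@dataclass
class Robot:
    name: str
    position: tuple      # (x, y, z)
    can_fly: bool


@dataclass
class Frontier:
    position: tuple      # (x, y, z)
    needs_flight: bool   # e.g. an elevated area a ground robot cannot reach


def assign_frontiers(robots, frontiers):
    """Greedily pair robots with reachable frontiers, cheapest pairs first."""
    pairs = []
    for r in robots:
        for j, f in enumerate(frontiers):
            if f.needs_flight and not r.can_fly:
                continue  # physically unreachable for this platform
            pairs.append((math.dist(r.position, f.position), r.name, j))
    pairs.sort()
    assignment, used_robots, used_frontiers = {}, set(), set()
    for _, name, j in pairs:
        # Each robot gets one target and no two robots share a target.
        if name in used_robots or j in used_frontiers:
            continue
        assignment[name] = j
        used_robots.add(name)
        used_frontiers.add(j)
    return assignment


robots = [Robot("drone", (0.0, 0.0, 2.0), can_fly=True),
          Robot("rover", (0.0, 0.0, 0.0), can_fly=False)]
frontiers = [Frontier((1.0, 0.0, 0.0), needs_flight=False),
             Frontier((0.0, 5.0, 3.0), needs_flight=True),
             Frontier((10.0, 0.0, 0.0), needs_flight=False)]
assignment = assign_frontiers(robots, frontiers)  # {'rover': 0, 'drone': 1}
```

In this example the ground robot takes the nearby ground-level target, leaving the elevated one to the drone instead of both heading for the same area.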

For project design and experimental validation, commercial aerial vehicles such as the Parrot Bebop 2 will be used together with ground vehicles such as the Lynxmotion A4WD1 mobile robot and other platforms already available at our research facilities. The framework will nevertheless be modular by design, so that new vehicles can be added with minimal effort, allowing this sensor network to grow as more hardware is incorporated. We foresee numerous applications for the proposed framework, in both civilian and military contexts, by providing the means to autonomously survey unknown areas and extract reconnaissance information that can readily be used to plan strategies for achieving a given goal.
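Modularity of this kind is commonly achieved by having planners talk only to an abstract vehicle interface, with one adapter per platform. The sketch assumes a deliberately minimal interface (`send_waypoint`, `pose` and a `can_fly` capability flag) and a simulated adapter; the vehicle names are labels only, and no Parrot or Lynxmotion API is used or implied.

```python
from abc import ABC, abstractmethod


class Vehicle(ABC):
    """Hypothetical minimal contract that every platform adapter implements."""

    def __init__(self, name, can_fly):
        self.name = name
        self.can_fly = can_fly

    @abstractmethod
    def send_waypoint(self, x, y, z):
        """Forward a goal position to the platform's own driver."""

    @abstractmethod
    def pose(self):
        """Return the latest (x, y, z) estimate for this vehicle."""


class SimulatedVehicle(Vehicle):
    """Placeholder adapter; a real one would wrap the platform's own driver."""

    def __init__(self, name, can_fly):
        super().__init__(name, can_fly)
        self._pose = (0.0, 0.0, 0.0)

    def send_waypoint(self, x, y, z):
        # Ground vehicles ignore altitude; the simulation "arrives" instantly.
        self._pose = (x, y, z if self.can_fly else 0.0)

    def pose(self):
        return self._pose


class Fleet:
    """Registry: planners address Vehicle objects, never specific hardware."""

    def __init__(self):
        self._vehicles = {}

    def register(self, vehicle):
        if vehicle.name in self._vehicles:
            raise ValueError(f"duplicate vehicle name: {vehicle.name}")
        self._vehicles[vehicle.name] = vehicle

    def names(self, aerial=None):
        """List registered vehicles, optionally filtered by flight capability."""
        return sorted(n for n, v in self._vehicles.items()
                      if aerial is None or v.can_fly == aerial)

    def dispatch(self, name, waypoint):
        self._vehicles[name].send_waypoint(*waypoint)
        return self._vehicles[name].pose()


fleet = Fleet()
fleet.register(SimulatedVehicle("bebop2", can_fly=True))
fleet.register(SimulatedVehicle("a4wd1", can_fly=False))
```

Adding a new platform then amounts to writing one more `Vehicle` subclass and registering it; the mapping and planning code stays unchanged.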